Retries with Resilience4j and How to Check Them in Your Real-World Environment

Do you know Resilience4j? You definitely should if you like building fault-tolerant applications. This blog post covers its retry mechanism and how to verify that it works in real-world environments.

Today we want to have a look at Resilience4j. It is super easy to use with Spring Boot and helps you build more resilient applications. In the simplest case, you only need to add a few annotations to your code and you are done.

Our Example System

In our example, we want to implement a retry in our famous online shopping demo. The demo consists of a Gateway microservice which provides a REST endpoint (/products) to deliver various products to a shop-frontend. Since the Gateway is stateless, it fetches all products directly from other microservices (Hot-Deals, Fashion and Toys) in a synchronous way.

The Gateway is using a service which handles the calls to the three backends delivering products.

Controller

    @GetMapping("/products")
    public Products getProducts() {
        Products products = new Products();
        products.setFashion(this.service.getFashion());
        products.setToys(this.service.getToys());
        products.setHotDeals(this.service.getHotDeals());
        return products;
    }

Service

    public List<Product> getFashion() {
        return this.restTemplate
                .exchange(this.urlFashion, HttpMethod.GET, null, this.productListTypeReference)
                .getBody();
    }

This is what a simple implementation using the Spring Framework with the RestTemplate could look like, but it has a major flaw: if the REST call to the fashion microservice throws an exception, the whole request fails and returns an error response, even when the other two backends answered correctly.

Adding the Retry

To solve this issue, we want to retry the call and provide some fallback data if every one of the three attempts throws an exception. To achieve this, we can add a single Resilience4j annotation to the service method like this:

    @Retry(name = "fashion", fallbackMethod = "getProductsFallback")
    public List<Product> getFashion() {
        ...
    }

Then add the fallback method:

    private List<Product> getProductsFallback(RuntimeException exception) {
        return Collections.emptyList();
    }

By default, Resilience4j will now call the annotated method up to three times (the initial call plus two retries), waiting 500 ms between attempts. If none of the attempts succeeds, Resilience4j calls the fallback method and uses its return value. But be careful: make sure that the retried operation is idempotent; otherwise you may end up with corrupted data.
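To make this behavior concrete, here is a minimal plain-Java sketch of the retry-then-fallback semantics described above. It is not Resilience4j's actual implementation; the class `RetrySketch` and the method `retryWithFallback` are hypothetical names chosen for illustration, and the values 3 and 500 ms simply mirror the defaults mentioned in this post.

```java
import java.time.Duration;
import java.util.Collections;
import java.util.List;
import java.util.function.Function;
import java.util.function.Supplier;

/** Plain-Java illustration of retry with fallback; NOT Resilience4j's implementation. */
final class RetrySketch {

    /**
     * Calls {@code call} up to {@code maxAttempts} times and sleeps {@code waitDuration}
     * between attempts. If every attempt throws, the last exception is handed to
     * {@code fallback} and its result is returned instead.
     */
    static <T> T retryWithFallback(int maxAttempts, Duration waitDuration,
                                   Supplier<T> call, Function<RuntimeException, T> fallback) {
        if (maxAttempts < 1) {
            throw new IllegalArgumentException("maxAttempts must be at least 1");
        }
        RuntimeException last = null;
        for (int attempt = 1; attempt <= maxAttempts; attempt++) {
            try {
                return call.get();          // success: no further attempts
            } catch (RuntimeException e) {
                last = e;
                if (attempt < maxAttempts) {
                    sleep(waitDuration);    // wait only between attempts, not after the last one
                }
            }
        }
        return fallback.apply(last);        // all attempts failed
    }

    private static void sleep(Duration duration) {
        try {
            Thread.sleep(duration.toMillis());
        } catch (InterruptedException e) {
            Thread.currentThread().interrupt();
            throw new IllegalStateException("interrupted while waiting to retry", e);
        }
    }

    public static void main(String[] args) {
        // A backend that always fails: three attempts, two waits, then the fallback.
        List<String> fashion = retryWithFallback(3, Duration.ofMillis(500),
                () -> { throw new IllegalStateException("fashion backend down"); },
                e -> Collections.emptyList());
        System.out.println(fashion); // prints []
    }
}
```

Note that the sketch waits only between attempts, so a request that ends in the fallback has spent two wait periods (about one second with the defaults) plus the time of the three failed calls.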

Ready to Test

You can implement a test using @SpringBootTest to check the desired behavior. An example can be found here. But wouldn’t it be cool to see the effects in your real world environment?

Due to the wait duration and the retries, a Gateway in a real-world environment will take more time to process requests than usual. This may impact the calling side and overall performance. That’s why it’s important to test this in an integrated environment under load:

- Make sure that the slowed down requests do not exceed the caller’s timeouts (or the fallback would be useless).

- Also verify that thread pools, memory or other resources aren’t exhausted because there may be more concurrent requests in your system as each request takes more time to process.
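Before running anything under load, both points can be estimated on paper. The following sketch is a rough budget calculation, not a measurement: the per-attempt timeout of 200 ms, the request rate of 100 requests per second and the class name `RetryBudget` are assumptions made up for illustration. Only the three attempts and the 500 ms wait come from the defaults discussed above.

```java
import java.time.Duration;

/** Back-of-the-envelope budget check for retries; all inputs are illustrative assumptions. */
final class RetryBudget {

    /**
     * Worst case for one backend call: every attempt runs into its timeout,
     * and there is one wait between each pair of consecutive attempts.
     */
    static Duration worstCase(int maxAttempts, Duration waitDuration, Duration perAttemptTimeout) {
        return perAttemptTimeout.multipliedBy(maxAttempts)
                .plus(waitDuration.multipliedBy(maxAttempts - 1L));
    }

    /** Little's law: average requests in flight = arrival rate x average latency. */
    static double requestsInFlight(double requestsPerSecond, Duration averageLatency) {
        return requestsPerSecond * averageLatency.toMillis() / 1000.0;
    }

    public static void main(String[] args) {
        // Assumed: 200 ms timeout per attempt (hypothetical value).
        Duration perBackend = worstCase(3, Duration.ofMillis(500), Duration.ofMillis(200));
        System.out.println(perBackend.toMillis()); // prints 1600

        // The Gateway calls three backends one after another, so the worst cases add up.
        System.out.println(perBackend.multipliedBy(3).toMillis()); // prints 4800

        // Assumed: 100 requests per second. Slower requests mean more of them in flight.
        System.out.println(requestsInFlight(100, Duration.ofMillis(50)));   // prints 5.0
        System.out.println(requestsInFlight(100, Duration.ofMillis(1000))); // prints 100.0
    }
}
```

If the caller's timeout is shorter than the worst case computed here, the fallback will never reach the caller in that scenario, which is exactly what the first bullet warns about.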

That’s why we are using Steadybit to have a closer look and implement the following experiment.

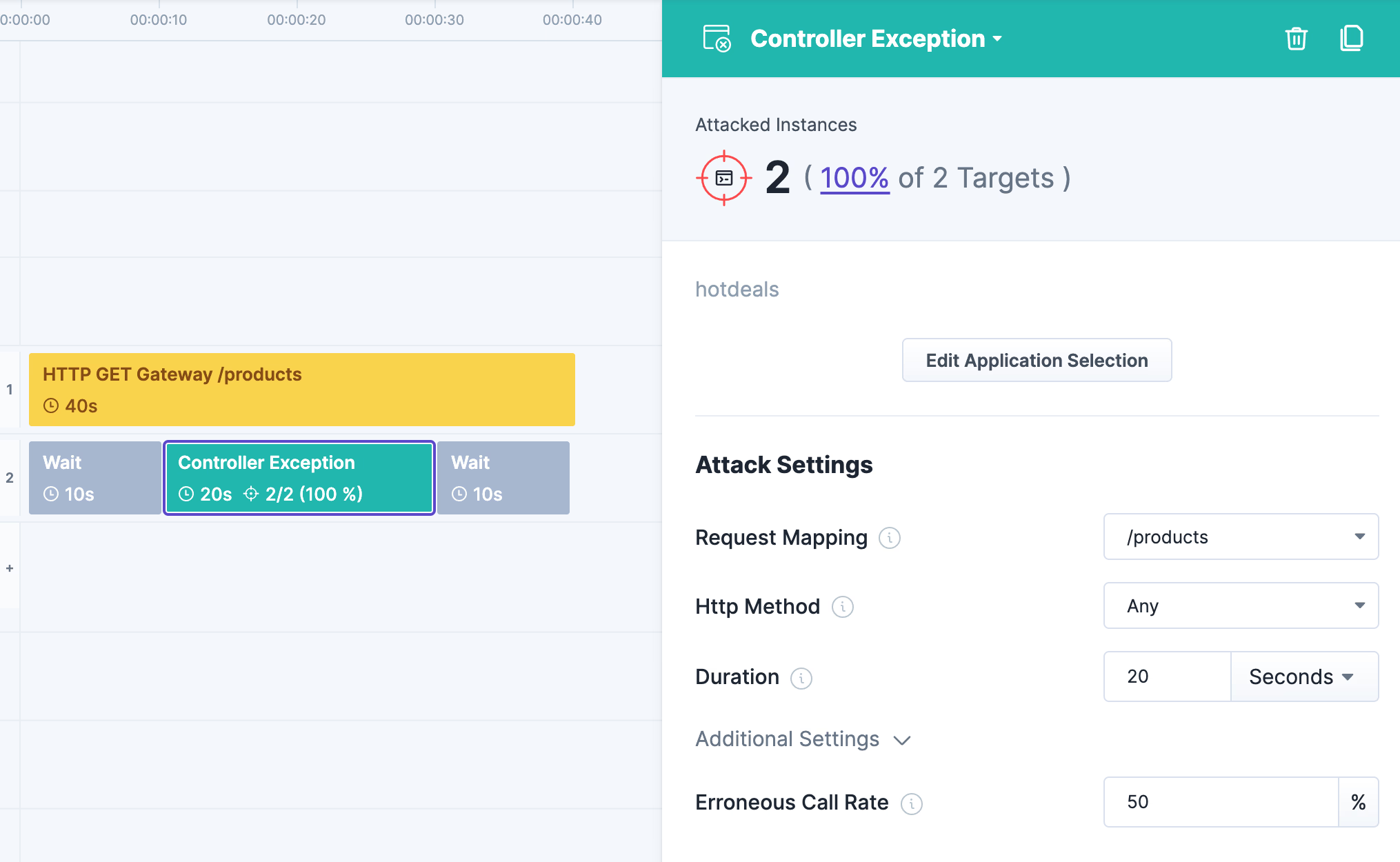

Experiment Design

- We will check the results of /products for 40 seconds

- After 10 seconds, we will introduce exceptions into both instances of the hotdeals backend. 50% of the calls to their /products endpoint should fail.

- After that, we will wait another 10 seconds to ensure the application is recovering from the attack.

Experiment Result with Unmodified Shopping Demo

First, we run the experiment on our unmodified shopping-demo. The result is as expected: the Gateway endpoint returns HTTP 500 for about 50% of the requests as long as the attack is running. The experiment fails.

Experiment Result with @Retry

Now, let’s try deploying our modified version with the @Retry. The results are much better. You can see three clusters of response times: some around zero milliseconds, some around 500 milliseconds, and some around one second. That’s the impact of the 500 millisecond wait duration between the attempts: no wait, one wait, or two waits. All responses return HTTP 200. The experiment completed successfully.
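A quick calculation shows which share of requests to expect in each cluster. This is a sketch under a simplifying assumption: every call to the attacked backend fails independently with the 50% probability configured in the experiment, and the retry uses the default of three attempts. The class `RetryOutcomes` is a hypothetical helper, not part of Resilience4j or Steadybit.

```java
/** Expected share of requests per response-time cluster; assumes independent failures. */
final class RetryOutcomes {

    /** Probability that a call succeeds on exactly the given attempt (1-based). */
    static double succeedsOnAttempt(int attempt, double failureRate) {
        return Math.pow(failureRate, attempt - 1) * (1.0 - failureRate);
    }

    /** Probability that all attempts fail, so the fallback value is returned. */
    static double fallbackShare(int maxAttempts, double failureRate) {
        return Math.pow(failureRate, maxAttempts);
    }

    public static void main(String[] args) {
        double failureRate = 0.5; // taken from the experiment design
        int maxAttempts = 3;      // Resilience4j default

        // No wait: first attempt succeeds.
        System.out.println(succeedsOnAttempt(1, failureRate)); // prints 0.5
        // One wait (about 500 ms): second attempt succeeds.
        System.out.println(succeedsOnAttempt(2, failureRate)); // prints 0.25
        // Two waits (about 1 s): third attempt succeeds ...
        System.out.println(succeedsOnAttempt(3, failureRate)); // prints 0.125
        // ... or all three attempts fail and the fallback is used.
        System.out.println(fallbackShare(maxAttempts, failureRate)); // prints 0.125
    }
}
```

Under these assumptions, roughly one request in eight would exhaust all attempts and be answered from the fallback. Such a response still has HTTP 200, but its hot-deals list is empty, so a status code check alone does not reveal how often the fallback was used.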

Reviewing the Metrics

If you enabled Spring Boot Actuator Endpoints for Metrics, you can also check them. Resilience4j publishes some nice metrics.

For example, /actuator/metrics/resilience4j.retry.calls?tag=name:hotdeals&tag=kind:successful_with_retry returns the following result:

    {
      "name": "resilience4j.retry.calls",
      "description": "The number of successful calls after a retry attempt",
      "baseUnit": null,
      "measurements": [
        {
          "statistic": "COUNT",
          "value": 28
        }
      ],
      "availableTags": []
    }
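The `kind` tag distinguishes four outcomes, so it is worth looking at all of them and not only at `successful_with_retry`. The small sketch below just builds the four metric URLs for one retry instance; the base URL `http://localhost:8080` and the class name `RetryMetricUrls` are assumptions for illustration, so adjust them to wherever your Gateway exposes its Actuator endpoints.

```java
import java.util.List;

/** Builds the Actuator URLs for the retry call counters of one retry instance. */
final class RetryMetricUrls {

    /** The values of the "kind" tag published for resilience4j.retry.calls. */
    static final List<String> KINDS = List.of(
            "successful_without_retry",
            "successful_with_retry",
            "failed_with_retry",
            "failed_without_retry");

    static String url(String baseUrl, String retryName, String kind) {
        return baseUrl + "/actuator/metrics/resilience4j.retry.calls"
                + "?tag=name:" + retryName + "&tag=kind:" + kind;
    }

    public static void main(String[] args) {
        // Assumed base URL of the Gateway; "hotdeals" is the retry name from the example above.
        for (String kind : KINDS) {
            System.out.println(url("http://localhost:8080", "hotdeals", kind));
        }
    }
}
```

A rising `failed_with_retry` counter is the one to watch during the experiment: it counts calls that still failed after all attempts, which are the calls that end in the fallback.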

Conclusion

Resilience4j is a very simple framework for adding basic fault tolerance mechanisms to your application. It’s definitely worth a look. The simple @Retry protects our shop-frontend from temporarily unavailable backends and HTTP errors.

Next Step

As the results show, our retry mechanism noticeably increases the response time and puts additional load on the three backends, especially when they are having problems. This could lead to other problems in your distributed system, which is why you should think about using a CircuitBreaker. In my next post, I’ll describe the use case of Resilience4j’s CircuitBreaker and how to test it with Steadybit.
