Who Needs GameDays? Resilience Testing using Testcontainers

As you may agree, it is important to test your code. The resilience patterns implemented in your application are code (even if they’re just a bunch of annotations). Often I saw that this is not tested thoroughly or not at all.

With Testcontainers writing integration tests has become a commodity. We envision the same for resilience tests, so Steadybit open sourced a small library built with the knowledge from our Chaos Engineering platform to help you write resilience tests with minimal effort and without installing extra software on your servers. The library is available on Maven Central and Github for everyone. In this Post we will add some resilience tests to a sample application using it.

The Sample Application

Before diving right into the resilience tests, we need to set up the stage:

We have a sample application processing orders (Github). Each time the user completes an order, a message is sent to a message queue from where it is picked up by the shipping application.

For the order application we already test (using Testcontainers) that a message is send for each order created:

@Test

void should_create_order() {

var orderId = http.postForObject("/orders", CREATE_ORDER_BODY, JsonNode.class).get("id").asLong();

var event = (OrderCreatedEvent) jms.receiveAndConvert("order_created");

assertThat(event.getId()).isEqualTo(orderId);

}

Prerequisites

For writing the resilience tests we need to include our library to the test dependencies. Add this to your Maven pom.xml:

<dependency> <groupId>com.steadybit</groupId> <artifactId>steadybit-testcontainers</artifactId> <version>1.0.0</version> <scope>test</scope> </dependency>

Adding Our First Resilience Test

A requirement of our application is that each request must succeed within two seconds.

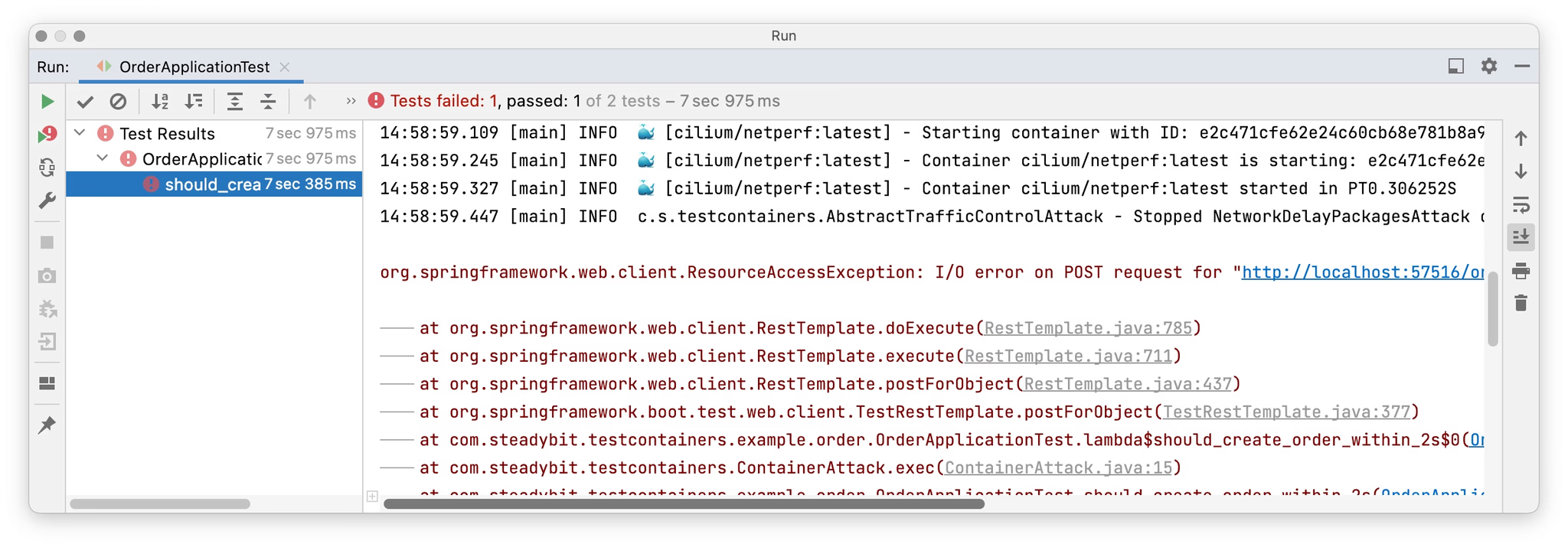

As our application depends on the message broker, we have to figure out what happens when the broker is not responding in time. For this we can use the Steadybit Testcontainers library to inject some latency and set a timeout for our TestRestTemplate to two seconds. This test is using our networkDelayPackages attack and looks like this:

@Test void should_create_order_within_2s() { var orderId = Steadybit.networkDelayPackages(Duration.ofSeconds(2)) .forContainers(broker) //the container to attack .exec(() -> { //This code will be executed while running the delay attack return http.postForObject("/orders", CREATE_ORDER_BODY, JsonNode.class).get("id").asLong(); }); var event = (OrderCreatedEvent) jms.receiveAndConvert("order_created"); assertThat(event.getId()).isEqualTo(orderId); } @TestConfiguration static class TestConfig { @Bean public RestTemplateBuilder restTemplateBuilder() { return new RestTemplateBuilder().setReadTimeout(Duration.ofSeconds(2)); } }

When we execute the test, we will see that the HTTP call will exceed the timeout.

One solution to this problem is to decouple message sending from processing of the HTTP request – for this we can use Spring’s @Async annotation:

@Async

public void publishOrderCreated(Order order) {

this.jmsTemplate.convertAndSend(destination, toEvent(order));

log.info("Published order {}", order.getId());

}

By dispatching the message from a different thread we have changed the behavior – especially when the operation completely fails (e.g. due a network partition or an unavailable broker): The HTTP request will be successful, but the message may not be sent.

Some message broker clients could send those messages later – but the unsent messages are often held in-memory, which may cause an OutOfMemoryError when the error persists for a longer period. On top of this those messages are completely lost when the application restarts.

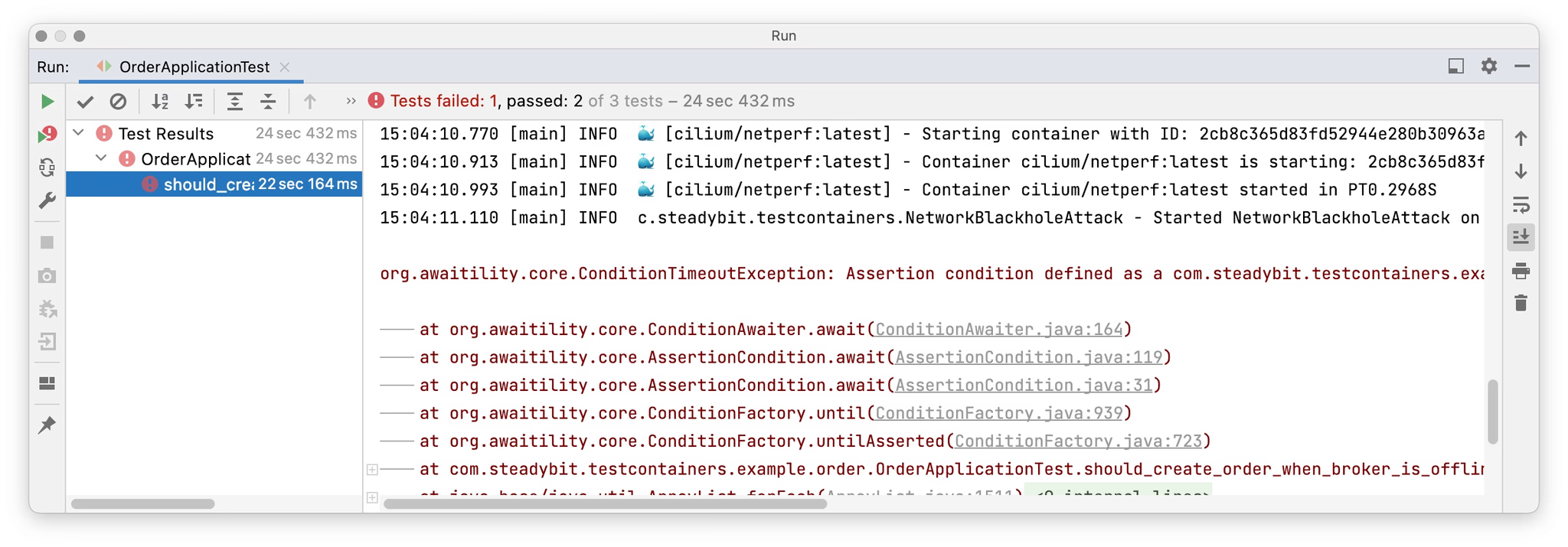

To verify this behavior we add a second resilience test to figure out what happens when the broker is not reachable (using the networkBlackhole-attack):

@Test void should_create_order_when_broker_is_offline() { var orderId = Steadybit.networkBlackhole() .forContainers(broker) .exec(() -> { var id = http.postForObject("/orders", CREATE_ORDER_BODY, JsonNode.class).get("id").asLong(); //we need to wait for the exception while executing the attack asyncUncaughtExceptionHandler.waitFor(UncategorizedJmsException.class); return id; }); await().untilAsserted(() -> { var event = (OrderCreatedEvent) jms.receiveAndConvert("order_created"); assertThat(event).isNotNull(); assertThat(event.getId()).isEqualTo(orderId); }); }

Unsurprisingly the test fails because the message is lost and never sent.

So how do we fix this problem?

Step 1) We add a little bookkeeping to the orders indicating whether they were published and sent to the message queue:

@Async

@Transactional

public void publishOrderCreated(Order order) {

this.repository.markAsPublished(order.getId(), Instant.now());

this.jmsTemplate.convertAndSend(destination, toEvent(order));

log.info("Published order {}", order.getId());

}

When sending fails, the transaction is rolled back and the order will not be marked as published.

Step 2) For reconciliation, we add a `@Scheduled` task that will send all unpublished orders.

@Scheduled(fixedDelay = 5_000L)

@Transactional

public void publishPendingOrders() {

for (Order order : this.repository.findPublishPending()) {

this.publishOrderCreated(order);

}

}

With those changes our resilience tests will now succeed and our application remains functional when the message broker fails.

Conclusion

As you can see, writing tests for resilience doesn’t need to be hard. The feedback cycle is shortened and it doesn’t need a GameDay to figure out that you have broken one of your resilience measures.

Try it on your own and include the library to your project and add some resilience tests for your application.

Not using Testcontainers or you have somewhat more complex scenarios to test? Try out our Steadybit platform right now.

Is this the death of Chaos Engineering? Cancel all your GameDays?

Certainly not. Today, most applications consist of multiple different services and deployment units and have properties emerging from their (microservice) architecture which are hard to test with an integration test for a single unit.

The resilience tests using testcontainers can dramatically shorten the feedback cycle but have different objectives then a GameDay. The tests are to disclose errors, to protect your future-self against regression. Whereas GameDays can be used to replay production incidents, to explore possible scenarios or to get you more routined with errors and incidents.

Get started today

Full access to the Steadybit Chaos Engineering platform.

Available as SaaS and On-Premises!

or sign up with

Book a Demo

Let us guide you through a personalized demo to kick-start your Chaos Engineering efforts and build more reliable systems for your business!