How to Survive an AWS Zone Outage

Cloud services such as AWS, Azure or GCP help us make software available to our customers in a short time. Resources can be used on demand and are often more cost-effective than running your own data center. But a cloud has some special characteristics that need to be considered. For example, individual components may be scaled dynamically, and a single server may be replaced by a new one at any time.

To put it in the words of the Amazon CTO:

"We need to build systems that embrace failure as a natural occurrence."

AWS offers several services and concepts to help build resilient systems. To that end, today we’re going to look at the concept of regions and zones, and consider what our architecture needs to look like to survive the failure of a single zone.

What are regions and zones?

Regions and Availability Zones (AZs) are powerful concepts for building highly available applications. Each region has multiple availability zones, where each zone consists of one or more discrete data centers with separate power, network and connectivity. If an application is distributed across AZs, its instances are isolated from each other and better protected from physical disasters such as ice storms, floods and earthquakes.
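To make the idea concrete, here is a minimal sketch of how one could check whether a deployment is spread widely enough to lose any single zone. The replica names, the `placements` mapping and both function names are assumptions made up for illustration; in a real setup the placement data would come from your cluster or cloud inventory.

```python
from collections import Counter


def zone_spread(placements):
    """Count replicas per availability zone.

    `placements` maps a replica name to the AZ it runs in.
    """
    return Counter(placements.values())


def survives_single_zone_outage(placements, min_replicas=1):
    """Return True if at least `min_replicas` remain after losing any one zone."""
    spread = zone_spread(placements)
    total = sum(spread.values())
    if total < min_replicas:
        # Not enough replicas even without an outage.
        return False
    return all(total - count >= min_replicas for count in spread.values())


# Hypothetical placement: two replicas of the product service in two zones.
placements = {
    "product-service-1": "us-east-2a",
    "product-service-2": "us-east-2b",
}
print(survives_single_zone_outage(placements))
```

If both replicas were placed in the same zone, the check would fail, which is exactly the situation an AZ outage experiment is meant to uncover.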

Prerequisites

- An application deployed in multiple AZs. If you have no application deployed, you can use our demo application and spin up an AWS EKS cluster with a few commands documented here. The EKS setup provides a ready-to-use cluster with 2 nodes.

- A tool to simulate an AZ outage. We use steadybit for that in our example, but you can use any other tool that is capable of doing that. The AWS Well-Architected Labs also provide an example for running an AZ outage.

Create Experiment

Before we start with our experiment we need to consider a few things. Looking at the Principles of Chaos, we should first determine the steady state of the application to later determine if our experiment was successful. The formulation of the hypothesis helps with this. For our simple example, we could define the following hypothesis:

"When the availability zone “us-east-2a” goes down, the product service still returns a product list with no issues."

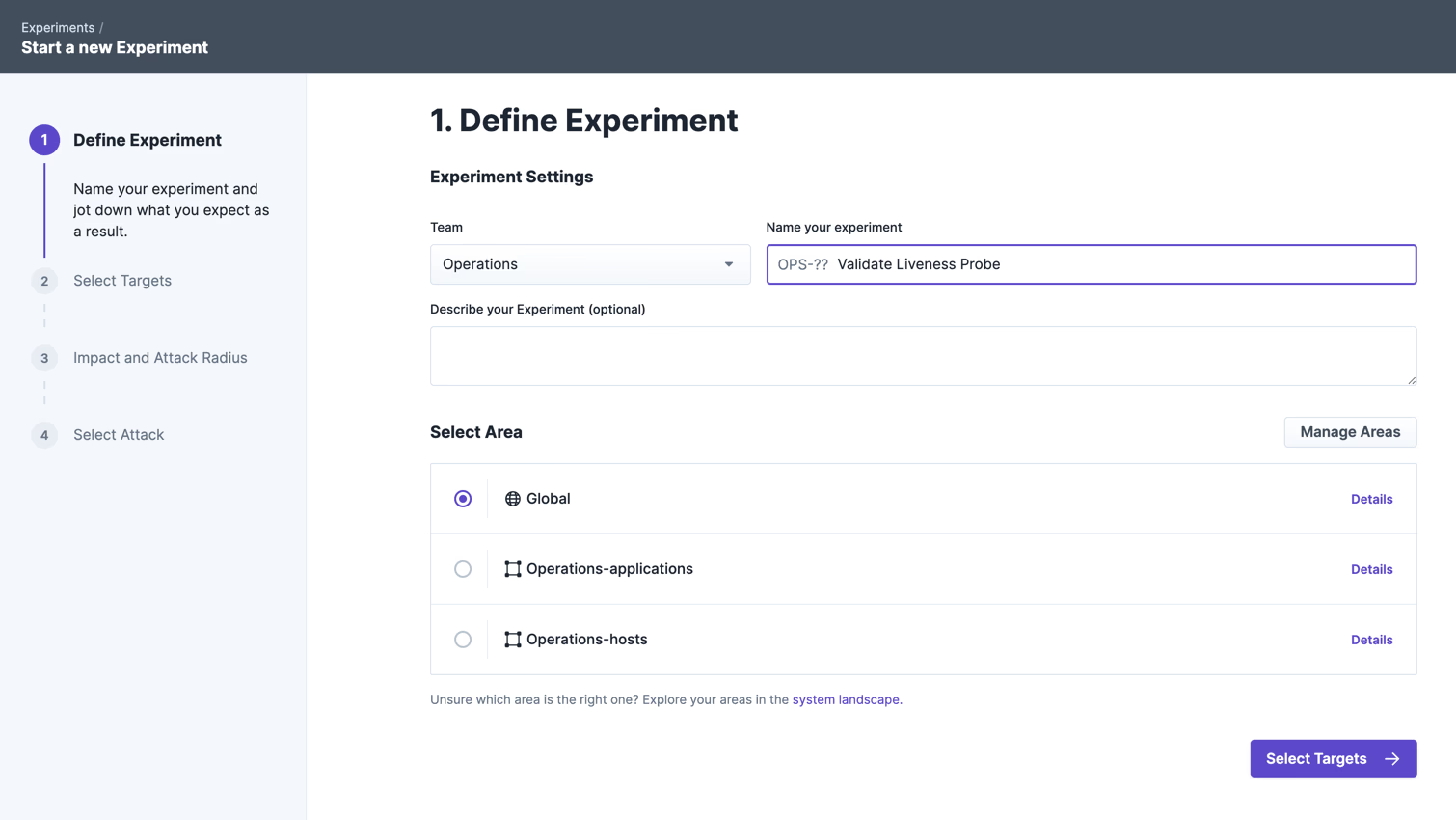

In the next step, we want to create and run the experiment with steadybit. To do this, we first assign a meaningful title and also directly enter the formulated hypothesis in the field provided, in order to know later exactly what was actually tested in this experiment.

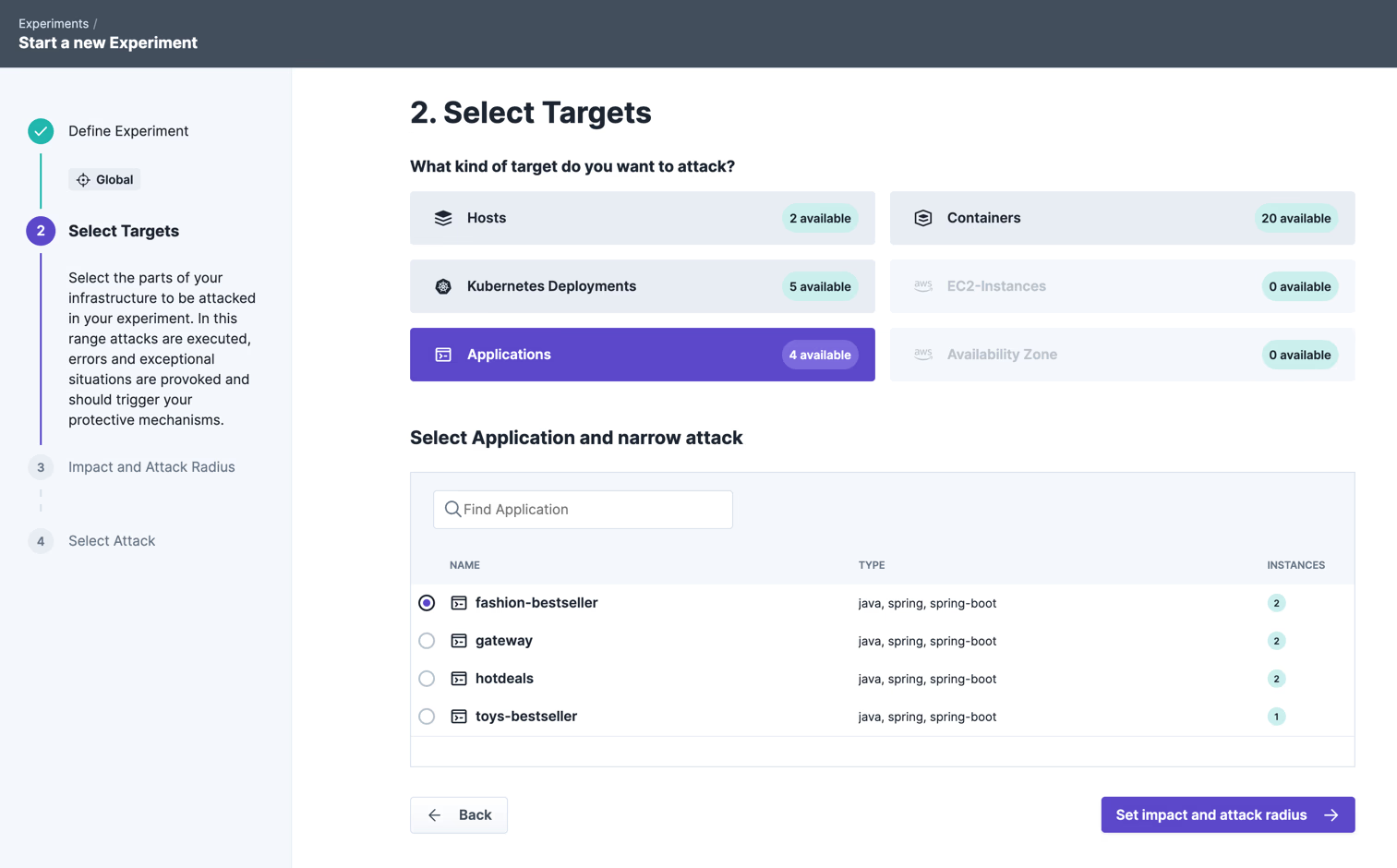

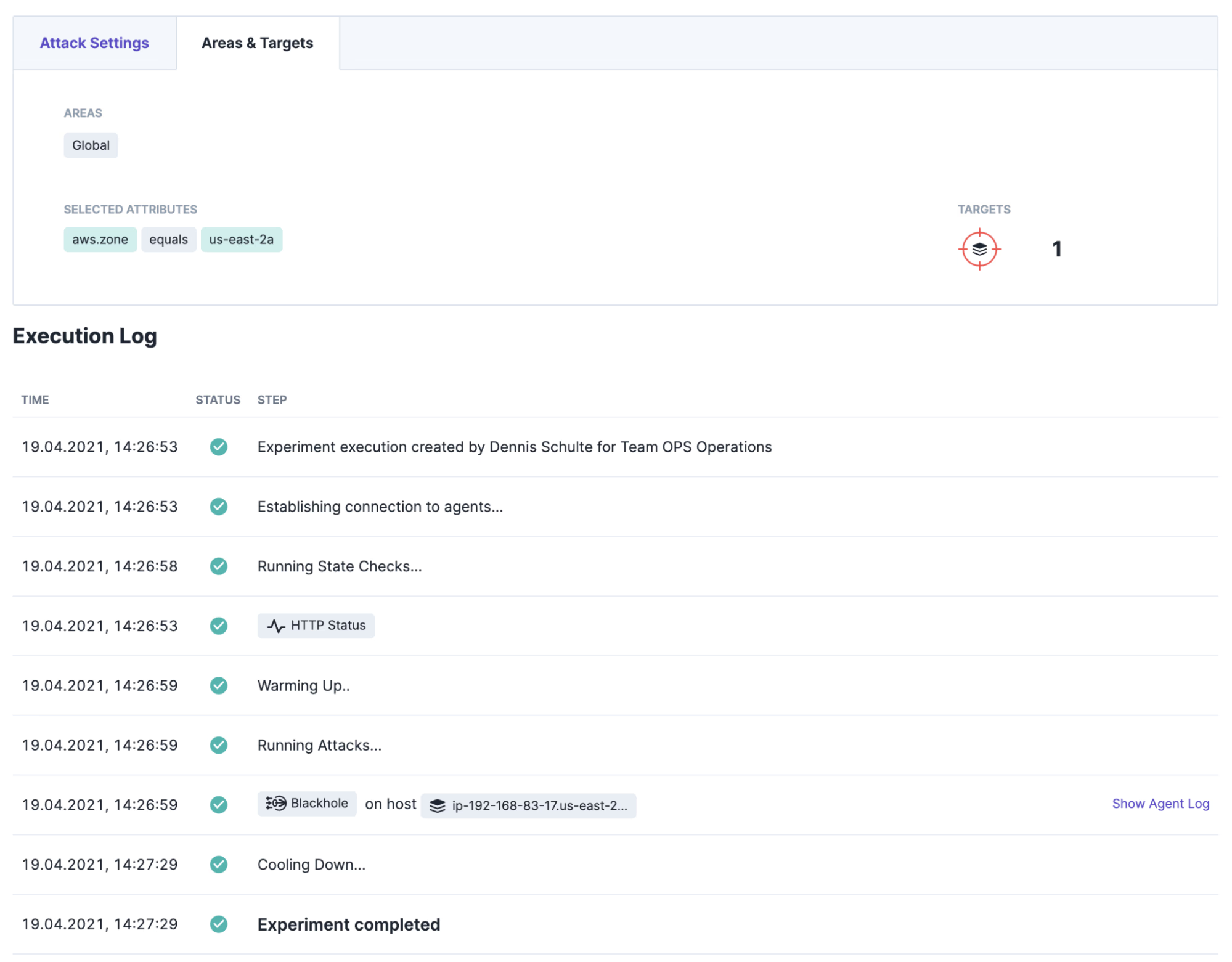

All hosts running in the availability zone “us-east-2a” are targeted. The metadata is automatically detected by steadybit and made available to the user for selection.

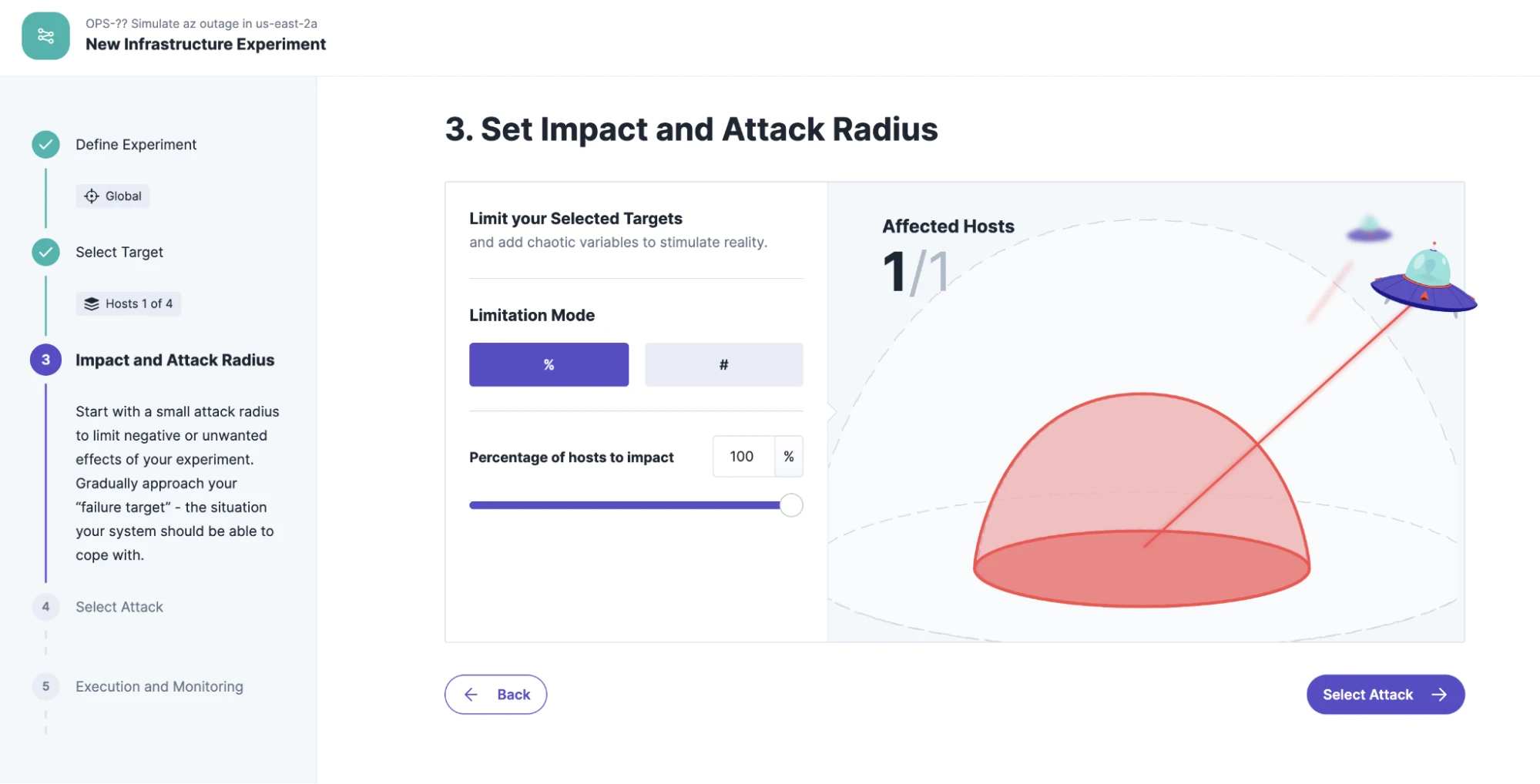

The definition of the blast radius is easy: we attack 100% of the hosts in the availability zone “us-east-2a”.
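The target selection and blast radius can be thought of as a filter followed by a percentage cut. The following is only a sketch of that idea, not how steadybit implements it; the host names, the `select_targets` function and the choice to round up are assumptions for illustration.

```python
import math
import random


def select_targets(hosts, zone, blast_radius_percent, seed=None):
    """Pick the hosts to attack in `zone`.

    `hosts` maps a host name to its availability zone.
    `blast_radius_percent` is the share of matching hosts to attack (0-100).
    """
    if not 0 <= blast_radius_percent <= 100:
        raise ValueError("blast_radius_percent must be between 0 and 100")
    in_zone = sorted(name for name, az in hosts.items() if az == zone)
    # Assumption: round up, so any non-zero radius hits at least one host.
    count = math.ceil(len(in_zone) * blast_radius_percent / 100)
    rng = random.Random(seed)
    return sorted(rng.sample(in_zone, count))


# Hypothetical inventory of three hosts in two zones.
hosts = {
    "node-1": "us-east-2a",
    "node-2": "us-east-2b",
    "node-3": "us-east-2a",
}
print(select_targets(hosts, "us-east-2a", 100))
```

With a blast radius of 100%, every host in the zone is selected, which matches what we want when simulating the loss of a whole AZ.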

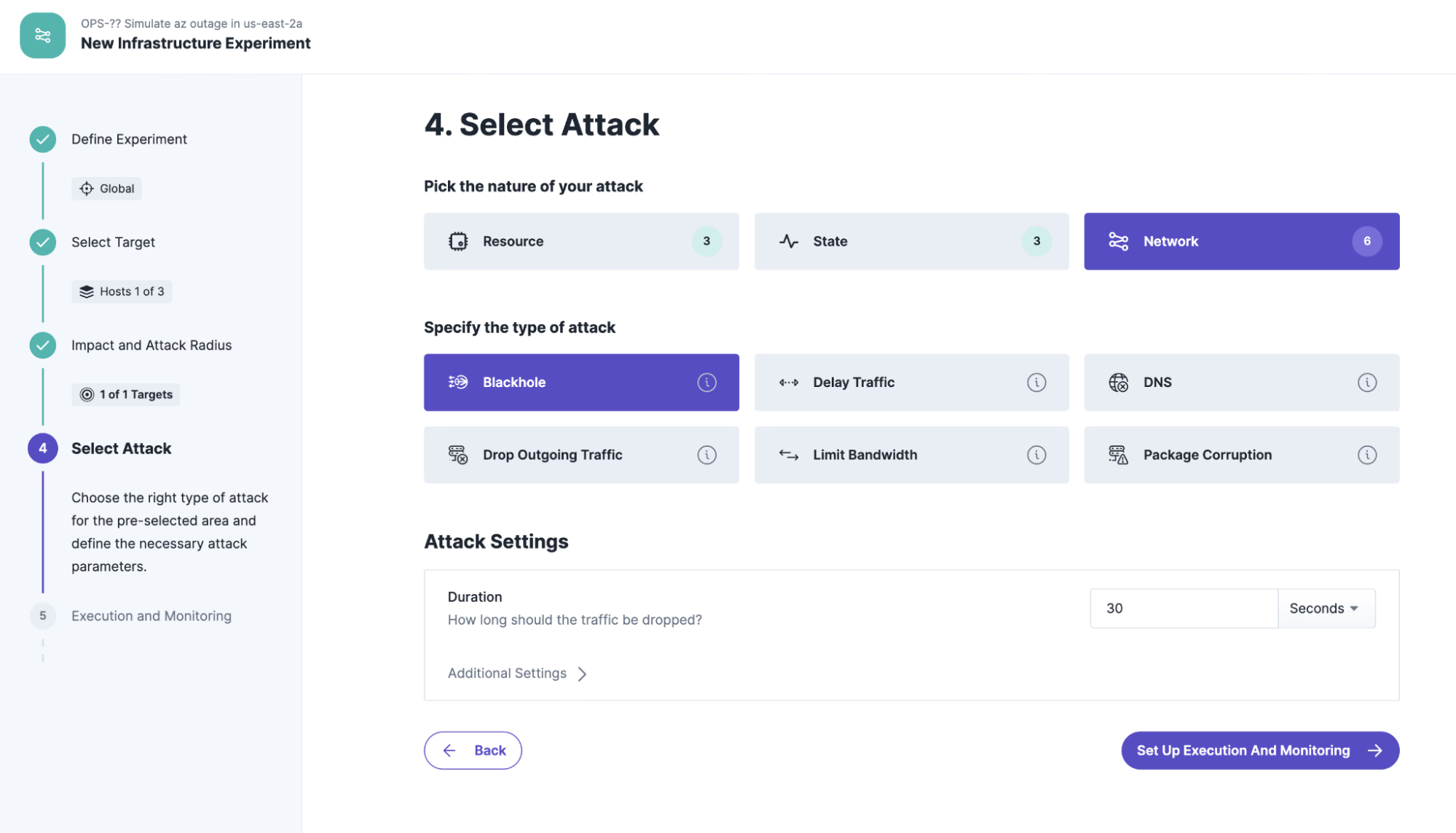

Various attacks can be selected to simulate a specific failure of an availability zone. The least invasive option is the blackhole attack. It blocks all network traffic to and from the selected target hosts in the AZ “us-east-2a” for the duration of the experiment. Alternatively, you could choose to shut down all hosts in the AZ, but then you have to verify that they are all restarted or replaced after the experiment.

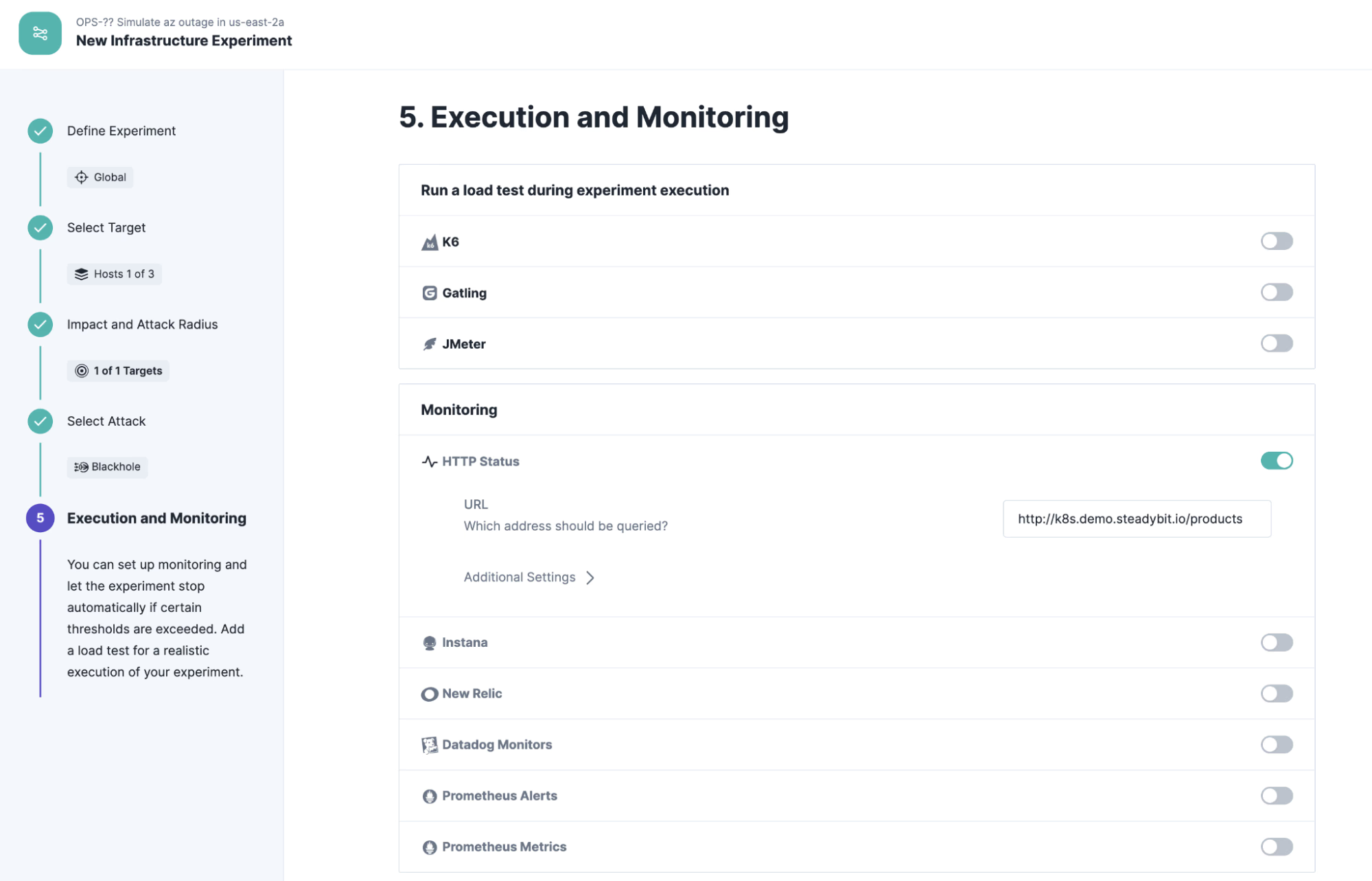

To validate the steady state, we configure a state check that checks whether the hypothesis is consistently met before and during the runtime of the experiment. For this simple example, we select the “HTTP State” check, which continuously calls the configured endpoint and validates the result. Please replace the endpoint with your own cluster endpoint, e.g. “http://{hostname:port}/products”.
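If you want to reproduce such a check outside of steadybit, a small polling loop is enough. The sketch below is our own approximation of a continuous HTTP check using only the Python standard library; the function names, the default interval and the rule that every single probe must succeed are assumptions, not the behaviour of the “HTTP State” check itself.

```python
import http.client
import time
import urllib.error
import urllib.request


def http_ok(url, timeout=2.0):
    """Return True if a GET to `url` answers with HTTP 200 within `timeout`."""
    try:
        with urllib.request.urlopen(url, timeout=timeout) as response:
            return response.status == 200
    except (OSError, http.client.HTTPException):
        # Covers connection errors, timeouts and 4xx/5xx responses
        # (urllib.error.HTTPError is a subclass of OSError).
        return False


def run_state_check(url, duration_s, interval_s=1.0, probe=http_ok):
    """Probe `url` repeatedly for roughly `duration_s` seconds.

    Returns (passed, results): `passed` is True only if every probe succeeded.
    """
    results = []
    deadline = time.monotonic() + duration_s
    while True:
        results.append(probe(url))
        if time.monotonic() + interval_s > deadline:
            break
        time.sleep(interval_s)
    return all(results), results
```

You could start it shortly before the attack with something like `run_state_check("http://{hostname:port}/products", duration_s=60)`, replacing the placeholder with your own cluster endpoint. The `probe` parameter exists so the loop can be tried out with a stub instead of a real endpoint.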

Run Experiment

Running the experiment yields a successful result. Kubernetes detects the failure of one node and routes all HTTP requests to the remaining functional node.

Conclusion

In this article, we looked at how availability zones help ensure the availability of services running in AWS. As a cross-check, you could now let both nodes fail and see what happens. Of course, it is recommended to run further experiments to cover as many scenarios as feasible that could affect the availability of your systems. What are your experiences with improving the availability of your AWS services?
